Pre-execution verification for AI agents

Your Agents Need a Rubber Duck

Developers have long debugged by explaining their code to a rubber duck. Now your AI agents can too, except this duck talks back with a second opinion.

Your agents are making decisions right now. Who's double-checking their homework?

Your agents are running blind

A runaway agent loop can burn through thousands of dollars in API costs overnight.

Your agent's 95% per-step accuracy sounds great until step 10, where end-to-end success falls to about 60%.

You're monitoring what went wrong. Nobody's checking what's about to go wrong.
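The 95%-versus-60% contrast is simple compounding: if every step must succeed and each succeeds with probability 0.95, a ten-step run succeeds end to end with probability 0.95^10, roughly 0.60. A quick check in Python, assuming steps fail independently (real agent steps often don't, so treat this as a rough model):

```python
def end_to_end_success(per_step: float, steps: int) -> float:
    """Probability that all steps succeed, assuming independent steps."""
    return per_step ** steps


for n in (1, 5, 10, 20):
    print(f"{n:>2} steps: {end_to_end_success(0.95, n):.1%}")
```

This prints 95.0%, 77.4%, 59.9%, and 35.8%: the longer the chain, the more a "small" per-step error rate dominates.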

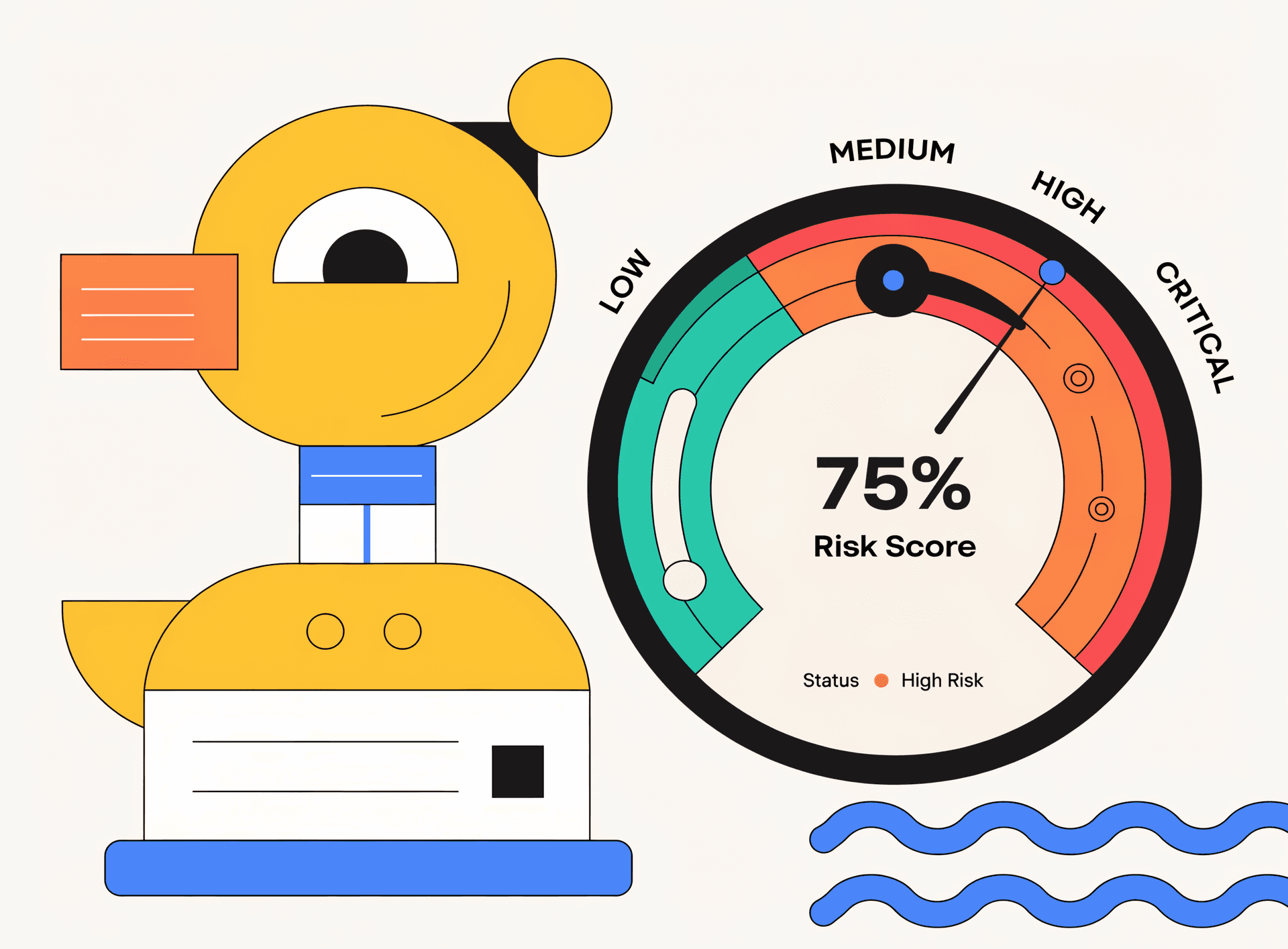

Cross-model verification closes 74.7% of quality gaps (GitHub research)

Three layers of defense

Cross-Model Verification

GPT reviews Claude. Claude reviews GPT. A model from a second AI family catches blind spots the original model cannot see.
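A minimal sketch of the routing rule, assuming each model is tagged with its vendor family: pick the first reviewer whose family differs from the planner's. The model names, family labels, and `pick_reviewer` helper are hypothetical illustrations, not Rubber Duck's actual configuration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    name: str
    family: str


# Hypothetical reviewer pool; names and families are illustrative only.
REVIEWER_POOL = (
    Model("claude-reviewer", "anthropic"),
    Model("gpt-reviewer", "openai"),
)


def pick_reviewer(planner: Model, pool: tuple = REVIEWER_POOL) -> Model:
    """Return the first reviewer whose family differs from the planner's."""
    for candidate in pool:
        if candidate.family != planner.family:
            return candidate
    raise LookupError(f"no reviewer outside the {planner.family!r} family")


print(pick_reviewer(Model("claude-planner", "anthropic")).name)  # gpt-reviewer
print(pick_reviewer(Model("gpt-planner", "openai")).name)        # claude-reviewer
```

Failing loudly when no cross-family reviewer exists is deliberate: silently falling back to a same-family reviewer would defeat the point of a second opinion.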

Loop Detection & Circuit Breaker

Detects repeating plan patterns in real time and recommends circuit-breaker actions before costs spiral.
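One way loop detection like this could work, sketched under assumptions: fingerprint each plan by hashing its canonical JSON, keep the last few fingerprints in a sliding window, and trip a latching circuit breaker when the same plan shows up too often. `LoopDetector`, the window size, and the repeat threshold are hypothetical, not the product's actual tuning.

```python
import hashlib
import json
from collections import deque


class LoopDetector:
    """Recommends halting when an agent keeps proposing the same plan.

    Window size and repeat threshold are illustrative defaults only.
    """

    def __init__(self, window: int = 10, max_repeats: int = 3) -> None:
        self.max_repeats = max_repeats
        self.recent = deque(maxlen=window)  # fingerprints of recent plans
        self.tripped = False

    @staticmethod
    def fingerprint(plan: dict) -> str:
        # Canonical JSON so key order does not change the hash.
        canonical = json.dumps(plan, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def observe(self, plan: dict) -> str:
        """Record a plan and return "continue" or "halt"."""
        if self.tripped:
            return "halt"  # breaker stays open until reset()
        fp = self.fingerprint(plan)
        self.recent.append(fp)
        if self.recent.count(fp) >= self.max_repeats:
            self.tripped = True
            return "halt"
        return "continue"

    def reset(self) -> None:
        """Close the breaker manually, e.g. after a human reviews the loop."""
        self.recent.clear()
        self.tripped = False


detector = LoopDetector(window=5, max_repeats=3)
plan = {"tool": "search", "query": "refund policy"}
for _ in range(4):
    print(detector.observe(plan))  # prints: continue, continue, halt, halt
```

Because the window is bounded, an alternating pattern (A, B, A, B, A) also trips the breaker once one plan repeats enough times within it.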

Risk Scores & Verdicts

Every plan gets an approve, flag, or reject verdict with a numeric risk score and actionable suggestions.
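Here's a minimal sketch of what a verdict might look like as data, assuming a risk score on a 0-to-1 scale and two cut-offs that map it to approve, flag, or reject. The `Verdict` shape, the scale, and the 0.4 / 0.7 thresholds are all hypothetical; a real service would calibrate its own.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Decision(str, Enum):
    APPROVE = "approve"
    FLAG = "flag"
    REJECT = "reject"


@dataclass
class Verdict:
    decision: Decision
    risk_score: float  # assumed scale: 0.0 (safe) to 1.0 (dangerous)
    suggestions: list = field(default_factory=list)


# Hypothetical cut-offs, chosen only for illustration.
FLAG_AT = 0.4
REJECT_AT = 0.7


def to_verdict(risk_score: float, suggestions: Optional[list] = None) -> Verdict:
    """Map a numeric risk score to an approve / flag / reject verdict."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be in [0, 1]")
    if risk_score >= REJECT_AT:
        decision = Decision.REJECT
    elif risk_score >= FLAG_AT:
        decision = Decision.FLAG
    else:
        decision = Decision.APPROVE
    return Verdict(decision, risk_score, list(suggestions or []))


v = to_verdict(0.55, ["Confirm the recipient before sending the email."])
print(v.decision.value, v.risk_score)  # flag 0.55
```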

Integration

Four steps to verified agents

Waddle in

Add the middleware

One import. One line of config. Works with LangChain, CrewAI, and AutoGen.
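This page doesn't show the actual middleware API, so here is a minimal sketch of the idea in plain Python: a hypothetical `verified` decorator that describes each call as a plan, asks a reviewer about it, and runs the action only on "approve". `toy_reviewer` stands in for the cross-model call; none of this is the LangChain, CrewAI, or AutoGen API.

```python
import functools
from typing import Any, Callable


class PlanRejected(RuntimeError):
    """Raised when the reviewer does not approve a plan."""


def verified(reviewer: Callable[[dict], str]) -> Callable:
    """Decorator factory: review each call before the wrapped action runs.

    `reviewer` is a hypothetical callable that takes a plan dict and
    returns "approve", "flag", or "reject".
    """
    def decorate(action: Callable[..., Any]) -> Callable[..., Any]:
        @functools.wraps(action)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            plan = {"action": action.__name__, "args": list(args), "kwargs": kwargs}
            decision = reviewer(plan)
            if decision != "approve":
                raise PlanRejected(f"{action.__name__}: reviewer said {decision!r}")
            return action(*args, **kwargs)
        return wrapper
    return decorate


def toy_reviewer(plan: dict) -> str:
    # Stand-in for a cross-model call: reject anything that deletes data.
    return "reject" if plan["action"].startswith("delete") else "approve"


@verified(toy_reviewer)
def send_report(to: str) -> str:
    return f"sent to {to}"


@verified(toy_reviewer)
def delete_records(table: str) -> None:
    ...


print(send_report("ops@example.com"))  # sent to ops@example.com
try:
    delete_records("customers")
except PlanRejected as err:
    print(err)  # delete_records: reviewer said 'reject'
```

In this sketch a "flag" blocks the action just like a "reject". That is the conservative choice; a real deployment might instead route flagged plans to a human.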

Quack check

Agent submits plan

Before any action executes, the plan payload is sent to Rubber Duck for review.
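The page doesn't specify the payload format. As an illustration only, a plan payload could be a small typed structure serialized to JSON; every field name here (`agent_id`, `goal`, `steps`, `model`) is a hypothetical choice, not Rubber Duck's schema.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class PlanStep:
    tool: str
    arguments: dict


@dataclass
class PlanPayload:
    agent_id: str
    goal: str
    steps: list = field(default_factory=list)
    model: str = "unknown"  # planner model, so a reviewer from another family can be chosen

    def to_json(self) -> str:
        # asdict recurses into the nested PlanStep dataclasses.
        return json.dumps(asdict(self), sort_keys=True)


payload = PlanPayload(
    agent_id="billing-agent-1",
    goal="Refund order 1042",
    steps=[
        PlanStep("lookup_order", {"order_id": 1042}),
        PlanStep("issue_refund", {"order_id": 1042, "amount": 19.99}),
    ],
    model="gpt-planner",
)
print(payload.to_json())
```

Sending the whole multi-step plan, rather than one tool call at a time, gives the reviewer enough context to spot a bad sequence before the first step runs.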

Duck huddle

Cross-model verdict

A model from a different family analyzes the plan and returns an approve, flag, or reject verdict.

Smooth sailing

Act with confidence

Your agent proceeds only when the plan is verified. Loops and bad plans never execute.

A second brain from a different AI family reviews every plan before execution
